Bridges Powers Artificial Intelligence that Can Speed Simulations of Neutron Star Mergers and Repeated Simulations that Predict Unique Signal from Unequal Mergers

Collisions between neutron stars involve some of the most extreme physics in the Universe. The intense effects of vast matter density and magnetic fields make them computation-hungry to simulate. To make matters stranger, many of these events result in a black hole that swallows up all but the gravitational evidence of the collision. Projects at the National Center for Supercomputing Applications (NCSA) and at Penn State used PSC’s Bridges supercomputing platform to shed light on these dramatic star crashes. The NCSA project used artificial intelligence on Bridges’ advanced graphics-processing-unit (GPU) nodes to obtain a correction factor that will allow much faster, less detailed simulations to produce accurate predictions of these mergers. The Penn State project used a series of simulations to predict an electromagnetic “bang” when the two merging stars have different masses, which isn’t present when the merging stars’ masses are similar.

Why It’s Important: Improving Simulations

Bizarre objects the size of a city but with more mass than our Sun, neutron stars spew magnetic fields a hundred thousand times stronger than an MRI medical scanner. A teaspoon of neutron-star matter weighs about a billion tons. It stands to reason that when these cosmic bodies smack together it will be dramatic. And nature does not disappoint on that count.

“We don’t know the nature of matter when it is super-compressed. Neutron stars are ideal laboratories to get insights into this state of matter. [Other than black holes] they are the most compact objects in the Universe.”—Shawn Rosofsky, NCSA

Scientists have directly detected two neutron-star mergers to date. These detections depended on two gravitational-wave-detector observatories. LIGO consists of two detectors, one in Hanford, Washington, and the other in Livingston, Louisiana. The European Virgo detector is in Cascina (near Pisa), Italy.

Astronomers would like to see the highest-quality computer simulations of neutron star mergers. Such simulations would allow them to identify what they should be looking for to better recognize and understand these events. But these simulations are slow and computationally expensive. Graduate student Shawn Rosofsky, working with advisor E. A. Huerta at NCSA at the University of Illinois at Urbana-Champaign, set out to speed up such simulations. To accomplish this, he turned to artificial intelligence using the advanced graphics processing units (GPUs) of the National Science Foundation-funded Bridges supercomputing platform at PSC.

Why It’s Important: Predicting a Bang

When two objects roughly the mass of the Sun and the size of cities slam together, it seems strange to talk about how “quiet” it is. But many neutron-star collisions are quiet, at least in terms of radiation we can detect. A strong surge of gravitational waves emerges from the impact, and is now being sensed by gravitational-wave detectors such as LIGO, in Hanford, Washington, and Livingston, Louisiana, and Virgo, in Santo Stefano a Macerata, Italy. But precious little else appears. That’s because the incredibly dense collapsed stars combine to form a black hole, which swallows any of the radiation that could have come out of the merger.

But that’s not the only way it can play out.

“Recently, LIGO announced the discovery of one [merger] event in which the two stars were of very different mass … The main consequence in this scenario is that we expect this very characteristic electromagnetic counterpart [to the gravity wave signal].”—David Radice, Penn State

The LIGO team reported the first detection of a neutron-star merger in 2017 and the second, which they named GW190425, in 2019. The first of the two collisions was about what astronomers expected, with a total mass of about 2.7 times the mass of our Sun and the two neutron stars about equal in mass. But GW190425 was much heavier, with a combined mass of around 3.5 Solar masses, and the masses of the two participants were more unequal, with a ratio possibly as high as 2 to 1.

That may not seem like such a huge difference. But only a small range of masses is possible for neutron stars. Objects lighter than about 1.15 times the mass of our Sun don’t collapse, and remain regular, dying stars. Those heavier than about 2.7 Solar masses collapse straight to a black hole. When the difference between the merging stars gets as large as in GW190425, scientists suspect that the merger could be messier, and louder in electromagnetic radiation. Astronomers detected no such signal from GW190425’s location. But coverage of that area of the sky by conventional telescopes that day wasn’t good enough to rule it out.

David Radice of Penn State, working as a member of CoRe, the Computational Relativity International Collaboration, which includes scientists in the U.S., Germany, Italy and Brazil, wanted to better understand the phenomenon of unequal neutron stars colliding and to predict signatures of such collisions that astronomers could look for. He turned to simulations on PSC’s Bridges supercomputing platform.

How PSC Helped: AI Correction

To pursue his goal of improving neutron-star collision simulations, Rosofsky set out to study the phenomenon of magnetohydrodynamic turbulence in the gases surrounding neutron stars as they merge. This physical process is related to the turbulence in the atmosphere that produces clouds. But in neutron star mergers, it takes place under massive magnetic fields that make it difficult to simulate in a computer. The scale of the interactions is so small, and so detailed, that a single simulation at the high resolution required to resolve these effects could take years.

“Subgrid modeling [with AI] allows us to mimic the effects of high resolutions in lower-resolution simulations. The time scale of the simulations with high-enough resolutions without subgrid modeling is probably years rather than months. If we [can] obtain accurate results on a grid by artificially lowering the resolution by a factor of eight, we reduce the computational complexity by a factor of eight to the fourth power, or 4,096.”—Shawn Rosofsky, NCSA
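The arithmetic behind that factor can be checked with a short sketch. It assumes, as is typical for explicit grid-based codes though not spelled out in the quote, that the work scales with the number of cells in three spatial dimensions and that the time step must shrink in proportion to the grid spacing, which supplies the fourth power. The function name `cost_reduction` and its parameters are illustrative only.

```python
def cost_reduction(coarsening: int, spatial_dims: int = 3) -> int:
    """Factor by which the work drops when the grid spacing grows by `coarsening`.

    Assumption: cost ~ (cells per dimension) ** spatial_dims * (number of time steps),
    with the number of time steps also proportional to the cells per dimension.
    """
    return coarsening ** (spatial_dims + 1)


# Lowering the resolution by a factor of eight, as in the quote above.
factor = cost_reduction(8)
print(factor)  # 4096
```

Under the same assumption, even a modest factor-of-two coarsening would cut the work by a factor of 16, which is why an accurate subgrid correction is so valuable.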

Rosofsky wondered whether deep learning, a type of artificial intelligence (AI) that uses multiple layers of representation, could recognize features in the data that allow it to extract correct predictions faster than the brute force of ultra-high resolutions. His idea was to produce a correction factor using the AI to allow lower-resolution, faster computations on conventional, massively parallel supercomputers while still producing accurate results.

Deep learning starts with training, in which the AI analyzes data in which the “right answers” have been labeled by humans. This allows it to extract features from the data that humans might not have recognized but which allow it to predict the correct answers. Next the AI is tested on data without the right answers labeled, to ensure it’s still getting the answers right.
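The train-then-test pattern described here can be sketched in a few lines. This is only an illustration: the data are synthetic, and a simple linear least-squares fit stands in for the deep neural network, which it is not. All names (`X`, `y`, `true_w`, `test_mse`) are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic labeled data: inputs X and the "right answers" y (assumed for illustration).
X = rng.normal(size=(200, 3))
true_w = np.array([1.5, -2.0, 0.5])
y = X @ true_w + 0.01 * rng.normal(size=200)

# Hold out part of the data so the model is scored on examples it never saw.
X_train, X_test = X[:160], X[160:]
y_train, y_test = y[:160], y[160:]

# "Training": fit the model parameters using only the labeled training set.
w, *_ = np.linalg.lstsq(X_train, y_train, rcond=None)

# "Testing": predict on held-out inputs, then compare with the withheld answers.
test_mse = float(np.mean((X_test @ w - y_test) ** 2))
print(f"held-out mean squared error: {test_mse:.2e}")
```

A low error on the held-out set is the evidence that the model has learned something general and has not simply memorized its training examples.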

“It takes several months to obtain high-resolution simulations without subgrid scale modeling. Shawn’s idea was, ‘Forget about that, can we solve this problem with AI? Can we capture the physics of magnetohydrodynamics turbulence through data-driven AI modeling?’ … We had no idea whether we would be able to capture these complex physics … But the answer was, ‘Yes!’”—E. A. Huerta, NCSA

Rosofsky designed his deep-learning AI to progress in steps. This allowed him to verify the results at each step and understand how the AI was obtaining its predictions. This is important in deep learning computation, which otherwise could produce a result that researchers might not fully understand and so can’t fully trust.

Rosofsky used the Stampede2 supercomputer at the Texas Advanced Computing Center (TACC) to produce the data to train, validate and test his neural network models. For the training and testing phases of the project, Bridges’ NVIDIA Tesla P100 GPUs, the most advanced available at the time, were ideally suited to the computations. Using Bridges, he was able to obtain a correction factor for the lower resolution simulations much more accurately than with the alternatives. The ability of AI to accurately compute subgrid scale effects with low resolution grids should allow the scientists to perform a large simulation in months rather than years. The NCSA team reported their results in the journal Physical Review D in April 2020.

“What we’re doing here is not just pushing the boundaries of AI. We’re providing a way for other users to optimally utilize their resources.”—E. A. Huerta, NCSA

The AI computations on Bridges showed that the method would work better and faster than gradient models, a conventional analytic approach to subgrid-scale modeling. They also provide a roadmap for other researchers to use AI to speed up other massive computations.
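For readers unfamiliar with gradient models, they estimate the effect of unresolved scales from gradients of the resolved field. The one-dimensional sketch below is an illustration only, not the NCSA team's code or setup: it filters a smooth stand-in field with a periodic box filter, computes the exact subgrid term, and compares it with the textbook gradient-model estimate. The test field, grid size and filter width are all assumptions.

```python
import numpy as np


def box_filter(f: np.ndarray, m: int) -> np.ndarray:
    """Periodic box filter: average each point with its m neighbors on either side."""
    return sum(np.roll(f, s) for s in range(-m, m + 1)) / (2 * m + 1)


n, m = 1024, 8                       # grid points and filter half-width (assumed)
h = 2 * np.pi / n                    # grid spacing on a periodic domain [0, 2*pi)
x = np.arange(n) * h
u = np.sin(x) + 0.5 * np.sin(3 * x)  # smooth stand-in for a high-resolution field

# Exact subgrid term: what the filtered (low-resolution) field cannot see.
u_bar = box_filter(u, m)
tau_exact = box_filter(u * u, m) - u_bar ** 2

# Gradient model: tau ~ (Delta^2 / 12) * (d u_bar / dx)^2, with Delta the filter width.
du_bar = (np.roll(u_bar, -1) - np.roll(u_bar, 1)) / (2 * h)  # periodic central difference
delta = (2 * m + 1) * h
tau_gradient = delta ** 2 / 12 * du_bar ** 2

corr = float(np.corrcoef(tau_exact, tau_gradient)[0, 1])
print(f"correlation between exact and modeled subgrid term: {corr:.4f}")
```

On a smooth field like this one the gradient estimate tracks the exact term closely. Turbulent fields are far less forgiving, which is the regime where a learned correction is meant to help.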

Future work by the group may include the even more advanced V100 GPU nodes of Bridges-AI, or the upcoming Bridges-2 platform. Their next step will be to incorporate the AI’s correction factors into large-scale simulations of neutron-star mergers and further assess the accuracy of the AI and of the quicker simulations. Their hope is that the new simulations will demonstrate details in neutron-star mergers that can be identified by gravitational-wave detectors. These could allow observatories to detect more events, as well as explain more about how these massive and strange cosmic events unfold.

You can read the NCSA team’s paper here.

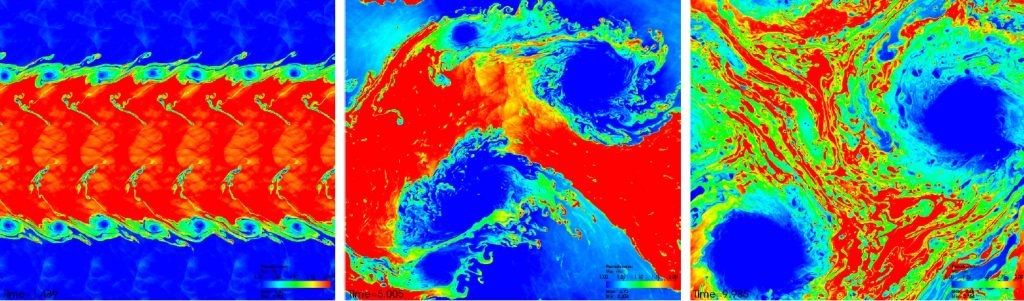

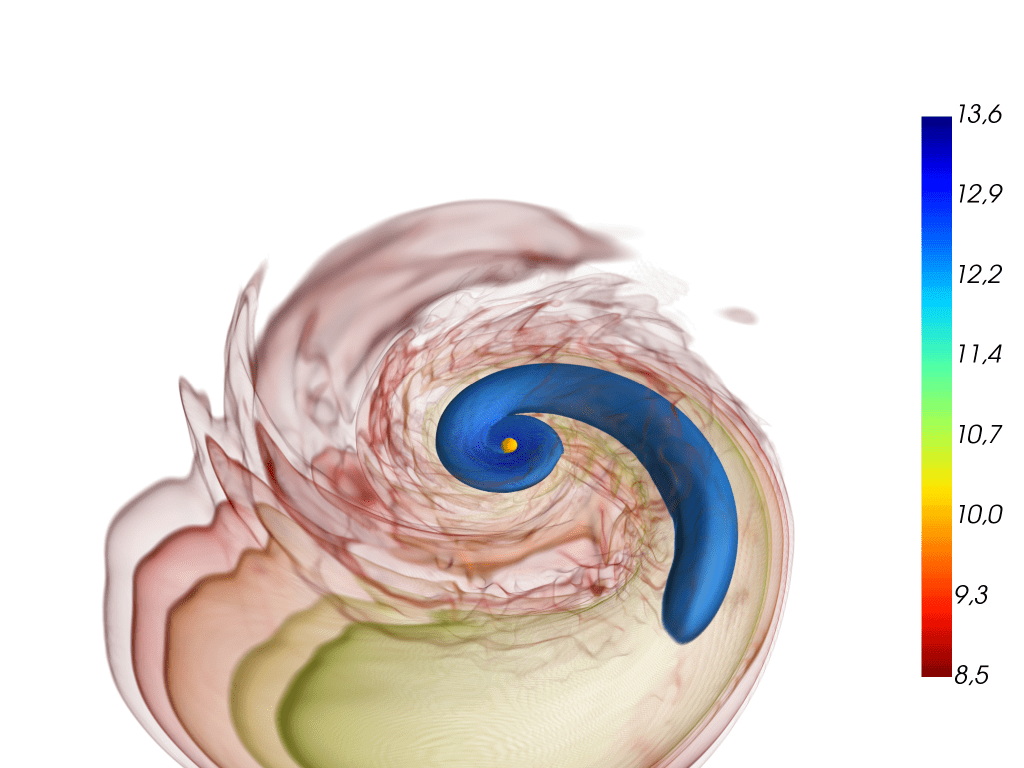

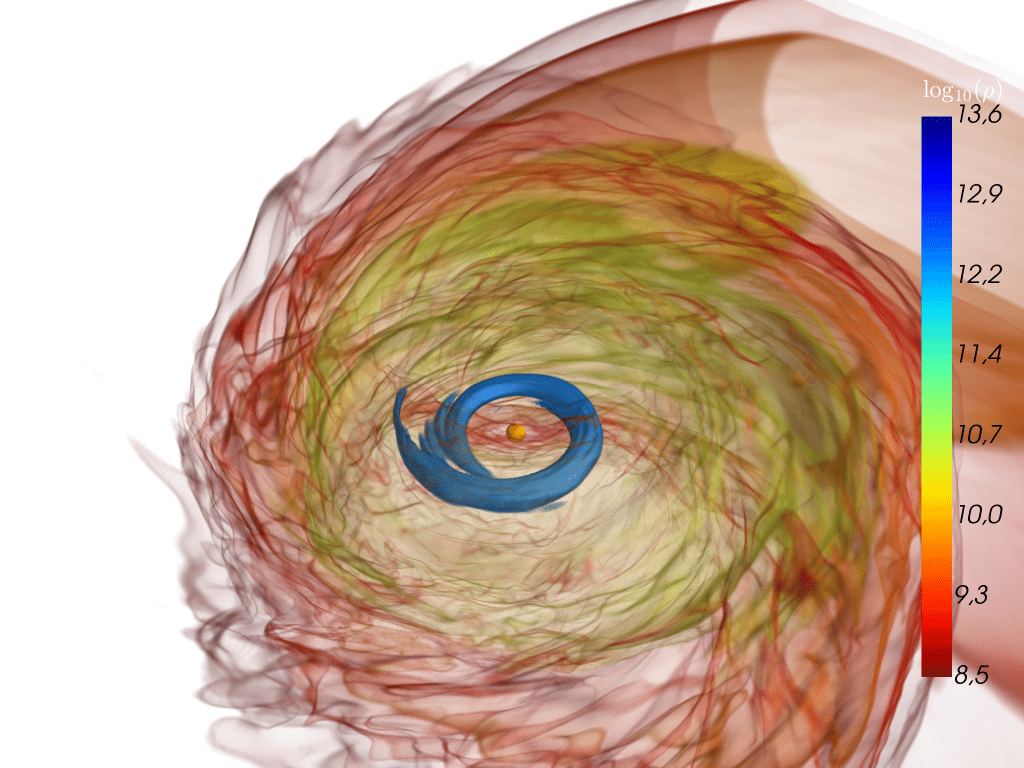

A neutron star is ripped apart by tidal forces from its massive companion in an unequal-mass binary neutron star merger (left). Most of the smaller partner’s mass falls onto the massive star, causing it to collapse and to form a black hole (middle). But some of the material is ejected into space; the rest falls back to form a massive accretion disk around the black hole (right). From Figure 4 in “Accretion-induced prompt black hole formation in asymmetric neutron star mergers, dynamical ejecta and kilonova signals,” Bernuzzi S et al., Monthly Notices of the Royal Astronomical Society.

How PSC Helped: Bringing the Power

To run his simulations of unequal neutron-star mergers, Radice needed an unusual combination of computing speed, large memory and flexibility in moving data between memory and computation. That’s partly because scientists know so little about these mergers for certain. To test the team’s ideas, he needed a significant number of computing cores (about 500), running for long periods (weeks), multiple times (about 20, in the current study). Radice employed a number of systems for this work, but the most useful he found were PSC’s Bridges and Comet at the San Diego Supercomputer Center, a partner with PSC in the National Science Foundation’s XSEDE network of supercomputing centers and computers.

“There is a lot of uncertainty surrounding the properties of neutron stars. In order to understand them, we have to simulate many possible models to see which is compatible with astronomical observations. A single simulation of one model would not tell us much; we need many simulations and large computation. We need a combination of high capacity and high capability that only machines like Bridges … can offer. This work would not have been possible without … access to [such] national supercomputing resources.”—David Radice, Penn State

The computations confirmed the scientists’ expectation of an electromagnetic bang.

As the two simulated neutron stars spiraled in toward each other, the gravity of the larger star tore its partner apart. That meant the smaller neutron star didn’t hit its more massive companion all at once. The initial dump of the smaller star’s matter turned the larger into a black hole. But the rest of its matter was too far away for the black hole to capture immediately. Instead, the slower rain of matter into the black hole created a flash of electromagnetic radiation.

The group reported their results in the journal Monthly Notices of the Royal Astronomical Society in June 2020. Their hope is that astronomers can use the simulated signature they found, combining gravitational-wave detectors and conventional telescopes, to detect the paired signals that would herald the breakup of a smaller neutron star merging with a larger one. You can read their paper here.