The link between the Cray Y-MP and the Connection Machine CM-2 paved the way for high-performance parallel interfaces between supercomputers. Pictured here is the installation of the Cray T3D system, which was connected to the Cray C90 by a data-transfer link.

1991 Union of PSC’s CRAY Y-MP and Connection Machine-2 Pioneered Heterogeneous Supercomputing, a Fundamental Approach Today

PSC40: Powering Discovery

2026 marks 40 years of PSC. As we continue with cutting-edge innovation, we look back on four decades of history in computing, education, and groundbreaking research, and on the people who made it happen.

Some problems have to be solved one step at a time, with each step feeding the next. That’s serial processing. Others can be split into many parts that are solved at the same time and then combined to get the answer. That’s parallel processing. But many, many problems include a little bit of both. In the early 1990s, PSC found itself with the powerful serial CRAY Y-MP supercomputer and the Connection Machine-2 (CM-2), a parallel-processing machine that was huge for its time. Thanks to know-how from PSC’s networking engineers, a CMU team was able, for the first time, to link two vastly different supercomputers to tackle complex problems requiring both types of processing. This approach to heterogeneous computing paved the way for modern supercomputing systems.

WHY IT’S IMPORTANT

Today, one of PSC’s greatest strengths is in heterogeneous computing. By that we mean linking together computers, or elements within computers, that are designed to solve different types of problems. A given computation can switch between those elements so that each task runs on the element best suited to do it quickly. PSC’s NSF-funded Bridges-2 and connected systems such as the AI-optimized Neocortex are tremendously popular among scientists, partly because they offer number-crunching CPUs, AI-friendly GPUs, large-memory CPUs, and experimental elements like Neocortex’s Wafer Scale Engines in a single package that juggles it all effectively.

In 1991, heterogeneous computing wasn’t a new idea. But linking supercomputer-class machines so that they could communicate and efficiently trade parts of a calculation had never been done before. PSC found itself in the position to do exactly that. In particular, Greg McRae of CMU and his graduate student, Robert Clay, wanted to see if PSC could combine its supercomputers to tackle a class of real-world problems called assignment problems. Different steps in these problems work better on different types of computers.

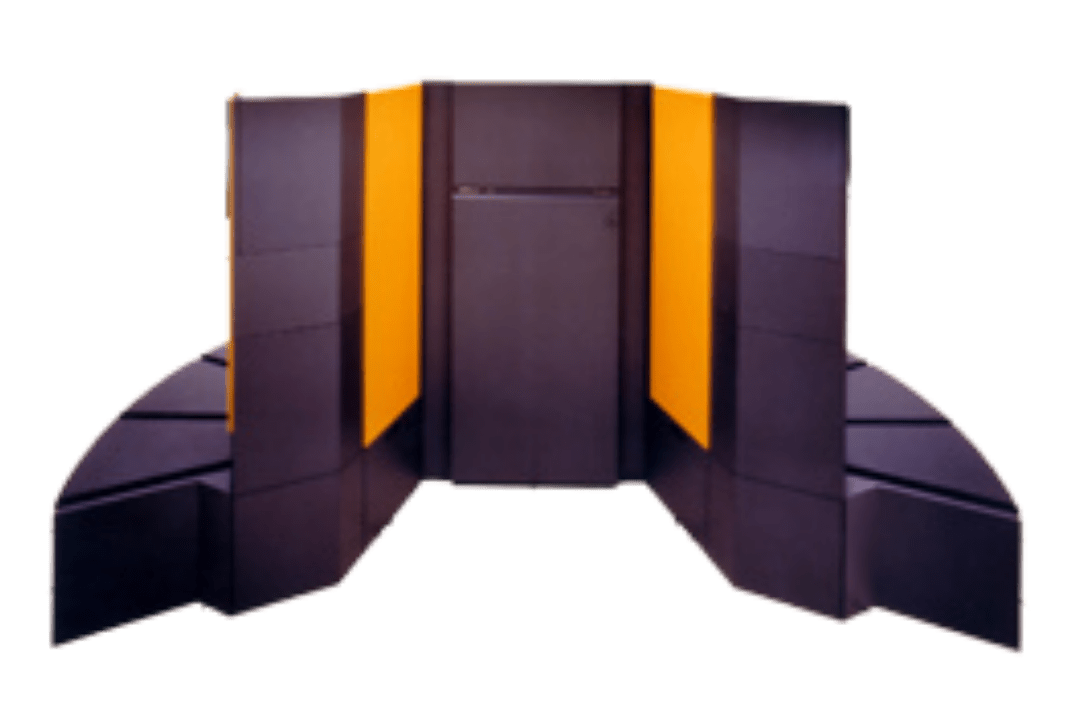

The center’s CRAY Y-MP had a small number of extremely powerful nodes that excelled at complex math requiring serial processing. In this type of processing, each step in a calculation depends on the results of the last step. There’s no shortcut: you just have to crunch the numbers quickly.

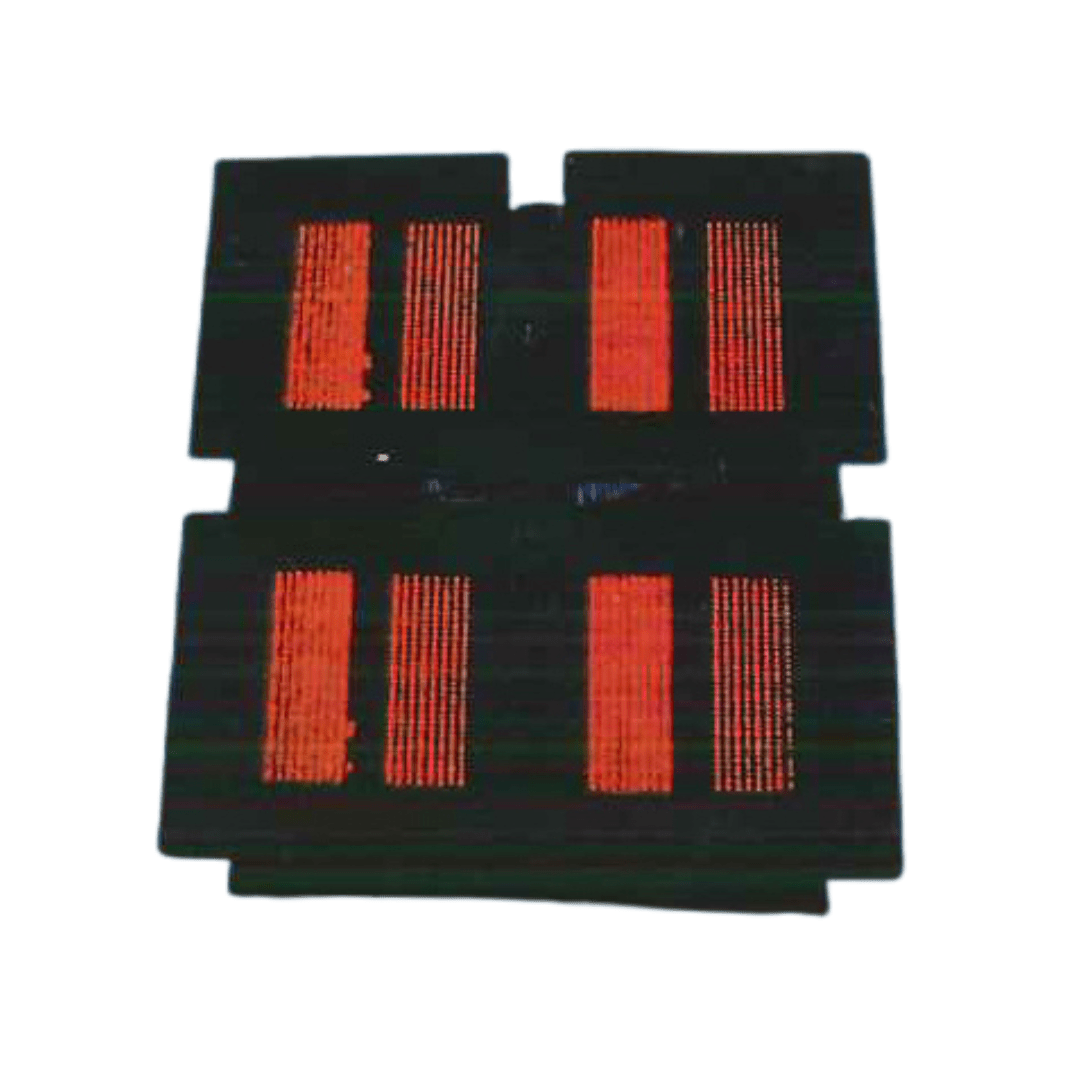

PSC’s CM-2 was a very different animal. Each of its CPUs was, frankly, unremarkable: about as powerful as you could get on a personal computer at the time. But there were 32,000 of them. It was part of the first generation of parallel-processing supercomputers, designed for calculations in which the steps are more or less independent of each other and so can all be done at the same time. You pooled their results at the end to get the final answer.

HOW PSC HELPED

Many important problems have elements of both serial and parallel processing. One of these is chemical process modeling. Here you have to plan processing of raw materials through a complex chemical plant with many different elements. It isn’t enough to have each element running at top efficiency. They all have to connect with each other at the right time and place. That way, the finished product isn’t delayed by roadblocks, say when a given process has to wait to start because one of its initial elements isn’t ready yet.

Mathematicians had solved the assignment problem, at least in theory, in the early 20th century. Their approach suggested that the CM-2’s many processors would excel, at least in the earlier parts of the computation.

But this initial step only identifies a family of possible best solutions. The next step, called initial matching, consists of taking that large number of solutions and determining which are optimal. That step is very serial in nature and so ran much more quickly on the Y-MP.

Finally, to complete the solution, the results from the Y-MP had to go back to the CM-2, where a parallel procedure created additional optimal matches, assigning the resources in the chemical plant to meet each other’s demands.
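To make the three phases a little more concrete, here is a toy sketch in Python. It is an illustration only: the article doesn't name the algorithm the CMU team ran, so this assumes a textbook-style approach in which a cost table is first reduced row by row and column by column (work that splits up nicely), and then a simple one-row-at-a-time matching is attempted (work that doesn't). The function names, the tiny 3×3 cost table, and the brute-force check are all made up for this example and have nothing to do with the original Y-MP or CM-2 codes.

```python
from itertools import permutations

def reduce_rows_and_columns(cost):
    """Subtract each row's minimum, then each column's minimum.

    Every row (and then every column) is handled independently of the
    others, so this is the kind of step a parallel machine can split up.
    """
    n = len(cost)
    by_row = [[c - min(row) for c in row] for row in cost]
    col_mins = [min(by_row[i][j] for i in range(n)) for j in range(n)]
    return [[by_row[i][j] - col_mins[j] for j in range(n)] for i in range(n)]

def greedy_initial_matching(reduced):
    """Give each row the first zero-cost column that is still free.

    Each choice depends on the choices made for earlier rows, which is
    what makes this step serial in nature.
    """
    taken = set()
    match = {}
    for i, row in enumerate(reduced):
        for j, value in enumerate(row):
            if value == 0 and j not in taken:
                match[i] = j
                taken.add(j)
                break
    return match

def brute_force_optimum(cost):
    """Check every possible assignment. Only practical for tiny tables."""
    n = len(cost)
    return min(
        sum(cost[i][p[i]] for i in range(n)) for p in permutations(range(n))
    )

# Hypothetical costs: rows are resources, columns are the jobs they could fill.
cost = [
    [4, 1, 3],
    [2, 0, 5],
    [3, 2, 2],
]

reduced = reduce_rows_and_columns(cost)
partial = greedy_initial_matching(reduced)

print(reduced)                    # [[2, 0, 2], [1, 0, 5], [0, 0, 0]]
print(partial)                    # {0: 1, 2: 0}
print(brute_force_optimum(cost))  # 5
```

In this toy case the initial matching places only two of the three rows, so more work is needed to finish the job. That leftover work stands in for the final phase described above, in which additional matches are created to complete the assignment.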

The crux of the problem was finding ways for the two machines to trade data so that each ran efficiently on its part of the problem. PSC network engineers Wendy Huntoon and Matt Mathis, along with senior consultant Jamshid Mahdavi, came to the rescue here. For the first time, they linked two supercomputers of radically different design using a High-Performance Parallel Interface (HiPPI). People in the high-performance computing community had been talking about such a HiPPI for years; the PSC team made it work.

The success of PSC’s HiPPI showed for the first time that heterogeneous computing wasn’t just an interesting idea. It was a workable solution to a family of problems that included chemical process modeling, assigning airline crews to routes, and other scheduling and routing problems. It also led directly to the great success of systems like today’s Bridges-2.